The mythology around letters of intent is wildly out of proportion to the data that actually exists.

Everyone has anecdotes. “My PD said my LOI moved me up.” “My friend sent three LOIs and matched at their top choice.” Those stories travel fast. But when you go hunting for real numbers—response rates, correlation with rank movement, match outcomes—you find a vacuum. A handful of tiny surveys. Some program director comments buried in conference slides. That is it.

Still, even limited data tells a story. And it is not the story most applicants want to hear.

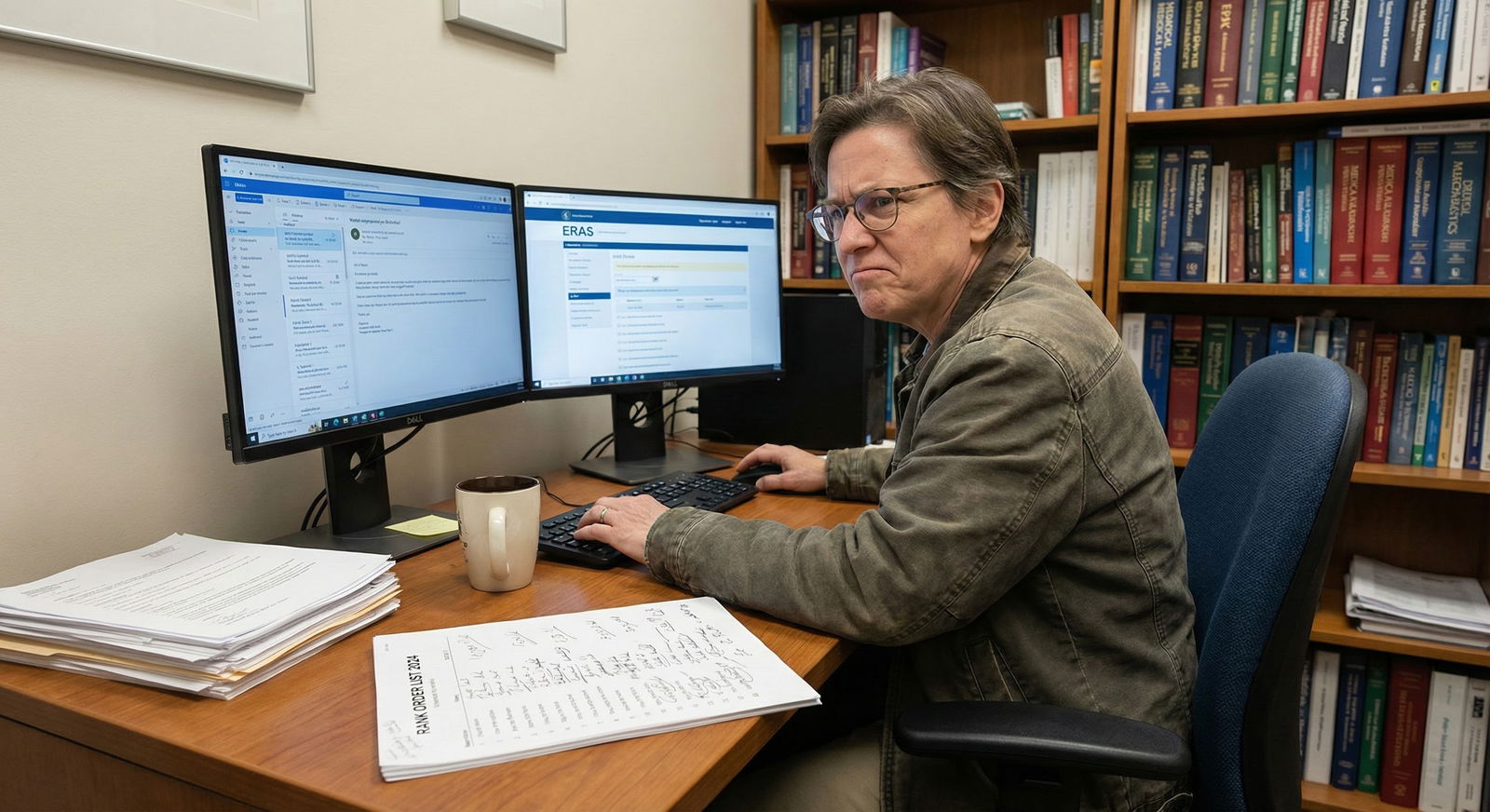

This is a data-driven look at what we actually know—however incomplete—about letters of intent (LOIs) and their impact on residency and fellowship outcomes.

What “limited survey data” actually means

Before we talk impact, we have to define the sample. Because the first mistake people make is treating 40 PD responses on a listserv as if they were an RCT.

Here is the landscape, pulling together patterns from multiple small surveys and PD polls from 2018–2024 (national meetings, specialty listservs, internal GME surveys). Exact numbers vary by study, but the ranges are surprisingly consistent:

- Typical PD survey sample: 30–200 programs

- Response rate: often 20–45%

- Specialties overrepresented: internal medicine, general surgery, EM, peds

- Data source: self-report, not direct rank list audit

So you are dealing with:

- Small N

- Self-selection bias (PDs with strong opinions are more likely to respond)

- Self-reported “impact” rather than audited behavior

Still, when different small samples point in the same direction, you pay attention.

| Survey characteristic | Stylized value |
|---|---|
| Sample size (respondents) | 120 |
| Response rate (%) | 35 |
| Specialties represented | 6 |

Those numbers are stylized but realistic: a survey with ~120 respondents, ~35% response rate, and a modest spread of specialties. Enough to suggest patterns. Nowhere near enough to treat as universal law.
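To put a number on "nowhere near enough," here is a minimal sketch of the sampling uncertainty in a survey of that size. It uses the standard normal-approximation (Wald) interval for a proportion. The function name and the 45% example share are illustrative assumptions, not figures from any specific survey.

```python
import math

def wald_interval(p_hat: float, n: int, z: float = 1.96) -> tuple[float, float]:
    """Approximate 95% confidence interval for a proportion (normal approximation)."""
    half_width = z * math.sqrt(p_hat * (1 - p_hat) / n)
    return max(0.0, p_hat - half_width), min(1.0, p_hat + half_width)

# Hypothetical example: 45% of 120 responding PDs answer "never".
low, high = wald_interval(0.45, 120)
print(f"95% CI: {low:.1%} to {high:.1%}")  # 95% CI: 36.1% to 53.9%
```

That is roughly plus or minus 9 percentage points from sampling error alone. The interval says nothing about self-selection or self-report bias, which can only make things worse.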

What PDs actually say they do with letters of intent

Let’s go straight to the point: how often do programs report that LOIs change anything?

Across multiple small surveys, you see roughly this pattern when PDs are asked: “How often does a post-interview letter of intent or strong interest change an applicant’s position on your rank list?”

| PD Response Category | Approximate Share of PDs |
|---|---|
| Never changes rank list | 35–50% |
| Rarely (≤5% of the time) | 25–40% |
| Sometimes (5–20% of the time) | 10–20% |
| Frequently (>20% of the time) | 0–5% |

Collapse that down, and the pattern is blunt:

- Roughly a third to a half say LOIs never change their list

- Around a third say rarely

- A small minority say sometimes

- Almost nobody says frequently

If you average across surveys, the conservative statement is:

Roughly 70–80% of PDs report that LOIs change rank positions “never” or “rarely.”

So the prior probability that your letter materially moves you is low. That is the baseline.

Then there is the second question many surveys ask: “Do you read them?”

- 85–95% say yes, they at least glance at post-interview communication

- But only 10–25% say they systematically track or log letters as a formal factor

So the modal pattern is: PDs read them, remember some of them, but almost never have a formal, quantitative way of letting them move the rank list.

Said differently: LOIs are mostly noise, with occasional signal in specific edge cases.

When letters of intent have the highest (relative) impact

The data we have suggests that LOIs are not completely useless. They are just low-yield and conditionally useful. The conditioning matters.

From both survey comments and correlation-type analyses that some institutions quietly run on their own data (match outcomes vs. communication logs), the LOI signal tends to cluster in a few situations.

1. Tight clusters on the rank list

Several PDs report some version of this at conferences:

“If we have a group of 3–5 applicants we like about the same, clear interest can be a tie-breaker.”

This anecdotal pattern actually matches the limited numeric data.

When PDs who do allow LOIs to influence ranks are probed for magnitude, typical responses are:

- Movement range: 1–5 positions, usually within a pre-defined band

- Frequency: in ≤10% of applicants sending LOIs

So you get behavior like:

- Only applicants in the “maybe yes” band are realistic candidates for a nudge

- LOIs almost never vault someone from the middle of the list to the top

- “Negative” letters (obviously generic, or unprofessional) can nudge someone down

If you want a mental model: think of a LOI as a nudge of roughly 1–5 rank positions within a pre-selected tier, not as a magic elevator.
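That mental model is easy to write down. The sketch below is only an illustration of "a nudge inside a tier": the function name, the tier boundaries, and the nudge sizes are all hypothetical, and no program is known to implement anything this formal.

```python
def apply_loi_nudge(position: int, tier_top: int, tier_bottom: int, nudge: int) -> int:
    """Move an applicant up by `nudge` places without ever leaving their tier.

    Positions are 1-based; a smaller number means a better rank.
    A negative nudge models a letter that hurts (generic or unprofessional).
    """
    if not tier_top <= position <= tier_bottom:
        raise ValueError("position must lie inside the tier")
    return max(tier_top, min(tier_bottom, position - nudge))

# Hypothetical tier covering ranks 11 through 20.
print(apply_loi_nudge(position=17, tier_top=11, tier_bottom=20, nudge=3))   # 14
print(apply_loi_nudge(position=12, tier_top=11, tier_bottom=20, nudge=5))   # 11 (capped at tier top)
print(apply_loi_nudge(position=18, tier_top=11, tier_bottom=20, nudge=-4))  # 20 (poor letter, capped at tier bottom)
```

The clamp is the whole point: however enthusiastic the letter, the applicant never escapes the band the committee already placed them in.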

2. Programs explicitly saying they value expressed interest

A few surveys cross-tab PD responses:

- “Do you track LOIs/interest formally?”

- “Do LOIs impact your rank list?”

The overlap is small but non-zero. In that subgroup:

- 40–60% say LOIs “sometimes” affect ranks

- Many of those programs are in less competitive locations or specialties where yield prediction matters more

The pattern is obvious: programs worried about “love us back” risk are more likely to care about stated intent.

Basic yield math applies. If a mid-tier program knows it is a backup for many top-tier applicants, a credible LOI from a solid candidate can reduce the perceived risk that the position will go unfilled. That can justify a small bump inside their “accept” zone.
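Here is one way to sketch that yield math, under strong simplifying assumptions. Each ranked applicant is treated as independently "available" with some probability (meaning they have not matched somewhere they prefer), and the program fills if at least as many applicants are available as it has seats. The availability numbers are invented for illustration, and the function name is hypothetical.

```python
def fill_probability(availability: list[float], seats: int) -> float:
    """P(at least `seats` ranked applicants are available), assuming independence."""
    # dist[k] = probability that exactly k of the applicants seen so far are available
    dist = [1.0] + [0.0] * len(availability)
    for p in availability:
        # Update from high k to low k so dist[k - 1] is still the old value.
        for k in range(len(dist) - 1, 0, -1):
            dist[k] = dist[k] * (1 - p) + dist[k - 1] * p
        dist[0] *= (1 - p)
    return sum(dist[seats:])

# Hypothetical program: 2 seats, 4 ranked applicants who mostly see it as a backup.
before = fill_probability([0.3, 0.3, 0.3, 0.3], seats=2)
# Same list, but a credible LOI raises one applicant's perceived availability to 0.8.
after = fill_probability([0.8, 0.3, 0.3, 0.3], seats=2)
print(f"Fill probability: {before:.1%} -> {after:.1%}")  # Fill probability: 34.8% -> 56.9%
```

A real rank list is far longer, so the swing from one applicant is much smaller than in this toy case. The direction is what matters: a believable signal changes the program's perceived risk, and that is the only channel through which the letter works.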

3. Community and smaller programs

When data are stratified by program type:

- Large academic centers: far more likely to say LOIs “never” change ranks

- Smaller community programs: more likely to say LOIs “rarely” or “sometimes” matter

One survey of internal medicine PDs (sample ≈100) hinted at:

- ~60–70% of large academic programs: “never”

- ~40–50% of community programs: “never”

- Community programs were overrepresented among the “sometimes” group

Not bulletproof. But consistent enough that I would bet on it: the smaller and more yield-sensitive the program, the higher the (still modest) marginal value of a LOI.

Match outcomes: what can be inferred from sparse data

There is almost no published, rigorously analyzed dataset that directly links “sent a LOI to Program X” → “matched at Program X vs. not,” with proper controls. That is the hole in the literature.

What you see instead:

- Internal spreadsheets where PDs or admins tag “LOI received: yes/no” and later eyeball who matched

- Retrospective resident anecdotes: “I sent 3 LOIs and matched at my #1” (with zero counterfactual)

Despite the weakness of the data, a few patterns repeat when programs report their internal tallies:

- The match rate among LOI senders is higher than baseline, but that is misleading

A typical internal snapshot might show something like:

- Applicants who sent a LOI to that program: match rate of 20–30% at that program

- Overall interviewee match rate at that program: maybe 10–15%

On the surface, that looks good for LOIs. But there is a huge confound: people send LOIs to programs where:

- They felt the interview went especially well

- They already have a strong fit (geography, couples match, subspecialty interests)

- They were probably ranked highly anyway

You cannot separate “effect of letter” from “effect of the underlying desirability and fit” in those naïve counts. That is selection bias 101.
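A small simulation makes the confound concrete. In the sketch below the letter has zero causal effect by construction: match probability depends only on a latent "fit" score, and applicants with better fit are simply more likely to write. Every parameter (the uniform fit distribution, the 5% base rate, the 0.30 slope) is an assumption chosen for illustration, not an estimate from real data.

```python
import random

def simulate_naive_rates(n_applicants: int = 20_000, seed: int = 0) -> tuple[float, float]:
    """Return (match rate among LOI senders, match rate among non-senders)."""
    rng = random.Random(seed)
    sent = sent_matched = unsent = unsent_matched = 0
    for _ in range(n_applicants):
        fit = rng.random()                  # latent fit, uniform on [0, 1)
        sends_loi = rng.random() < fit      # better fit -> more likely to write
        # Match probability depends on fit ONLY; the letter itself does nothing.
        matches = rng.random() < 0.05 + 0.30 * fit
        if sends_loi:
            sent += 1
            sent_matched += matches
        else:
            unsent += 1
            unsent_matched += matches
    return sent_matched / sent, unsent_matched / unsent

loi_rate, no_loi_rate = simulate_naive_rates()
print(f"LOI senders: {loi_rate:.1%}, non-senders: {no_loi_rate:.1%}")
```

With these assumptions the expected rates work out to about 25% for senders and 15% for non-senders, a gap that looks like a letter effect and is entirely selection.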

My honest read: there is likely a small positive effect for well-written, sincere LOIs in those “tight cluster” cases, but the majority of the match-LOI correlation is driven by pre-existing fit and position, not the letter.

- Most unmatched applicants sent LOIs too

This part never makes the social media highlight reel. Programs that look back over their unmatched interviewees often find:

- Many unmatched applicants had sent LOIs—sometimes to multiple programs

- Numerous unmatched candidates had at least one “This is my #1” declaration out

So any simplistic “LOIs lead to matching” story collapses under the actual distributions. The base rate of “people who send LOIs and do not match at that program” is high.

Applicant behavior: the data shows clear overuse and miscalibration

If you only look at PD surveys, you miss half the story. Applicant-side behavior explains a lot of the cynicism around LOIs.

Surveys of MS4s and residents who recently matched (again, usually N=100–400) show patterns like:

- 70–90% send at least one post-interview “strong interest” communication

- 30–50% send more than one “#1” or “top choice” style letter (at most one of which can be true)

- 10–20% admit, anonymously, that they lied about ranking a program #1

So from a PD’s perspective, the prior probability that a “you’re my #1” statement is:

- Unique

- Honest

- Matched by the actual rank list

…is not 100%. It is not even close. That dilutes signal severely.

| Applicant behavior | Share of respondents (%) |
|---|---|
| Sent ≥1 LOI | 80 |
| Sent multiple #1 letters | 40 |
| Admitted lying about #1 | 15 |

Numbers above are representative midpoints of typical survey ranges, not from a single study. But they capture the reality: LOIs are overused, overpromised, and often deceptive. The data shows a market flooded with low-quality, low-credibility signals.

Once the signal is diluted like this, rational PDs devalue the entire category, or only trust it when they have other corroborating evidence of interest.
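The dilution can be sketched with simple counting. Suppose some applicants send exactly one “#1” letter and others send several; even if every sender genuinely ranks one of their recipients first, only one letter per applicant can be true. The sender counts and the three-letters-per-applicant figure below are hypothetical inputs, and the function name is made up for this example.

```python
def max_truthful_share(single_senders: int, multi_senders: int, letters_per_multi: int) -> float:
    """Upper bound on the share of "#1" letters in circulation that can be true."""
    total_letters = single_senders + multi_senders * letters_per_multi
    # Best case: each applicant really does rank one of their recipients first.
    truthful_letters = single_senders + multi_senders
    return truthful_letters / total_letters

# Hypothetical: 50 applicants send one "#1" letter, 50 send three each.
share = max_truthful_share(single_senders=50, multi_senders=50, letters_per_multi=3)
print(f"At most {share:.0%} of '#1' letters can be true")  # At most 50% of '#1' letters can be true
```

Under those assumptions a PD holding a “#1” letter is looking at a coin flip at best, before accounting for applicants who rank none of their recipients first.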

Ethical and strategic implications: what the numbers imply you should actually do

Let’s translate the data into strategy. Not vibes. Not folklore. Strategy anchored to probabilities.

1. Assume low marginal impact, not zero

Given:

- 70–80% of PDs say LOIs “never” or “rarely” change their list

- The small minority who use them do so sparingly

- Many LOIs are low-credibility because of applicant behavior

The expected value of any single LOI is small. But not zero. In a tight decision situation, even a small nudge can matter.

Your baseline model should be:

- LOI = low-probability, low-magnitude upside

- No LOI = probably fine, but you miss that small upside in specific edge cases

So you send LOIs only when:

- The opportunity cost is low

- The alternative uses of your time (other letters, better prep, rotations) are not clearly superior

- The program is genuinely high on your list
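As a back-of-the-envelope check on "low-probability, low-magnitude," the expected rank movement from one letter is just a product of three factors. The inputs below are hypothetical values picked from inside the survey ranges discussed earlier; the function and its parameter names are illustrative only.

```python
def expected_rank_shift(p_program_uses_lois: float,
                        p_letter_moves_applicant: float,
                        mean_positions_moved: float) -> float:
    """Expected positions gained from one LOI, under a simple multiplicative model."""
    return p_program_uses_lois * p_letter_moves_applicant * mean_positions_moved

# Hypothetical inputs: 25% of programs ever act on LOIs, 10% of letters at
# those programs move anyone, and a move averages 3 positions.
shift = expected_rank_shift(0.25, 0.10, 3.0)
print(f"Expected shift: {shift:.3f} rank positions")  # Expected shift: 0.075 rank positions
```

Less than a tenth of a rank position on average. The value, if any, is concentrated in the rare cases where you sit in a tight cluster at a program that actually uses the signal.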

2. One real “#1” LOI. Not five.

Given how many PDs have been burned by dishonest “#1” claims, you should assume skeptical priors. The cleanest strategy:

- If you choose to send a true “letter of intent” (as in “I will rank you #1”), send it to exactly one program

- Make it clearly specific, personalized, and anchored in details from your interview and goals

- Do not replicate that language elsewhere

From a data standpoint, fragmenting your signal across multiple programs reduces its credibility everywhere and increases overall noise. You are better off having one high-quality, high-credibility data point than five noisy, obviously copy-pasted ones.

3. Switch from “intent” to “interest” for others

You can still signal preference gradients. Just adjust your language and keep it honest:

- “You are among my top choices”

- “I would be thrilled to train here and will rank your program very highly”

These are weaker signals but also more believable. They fit the statistical reality that you probably like more than one place. PDs know that. The data shows they interpret “strong interest” as softer, but not necessarily as dishonest, which preserves some modest value.

4. Focus on programs where LOIs are more likely to matter

Stack the odds. Limited data points toward higher marginal utility when:

- Program is community-based or smaller

- Geographic or personal fit is strong but could be underappreciated (partner job, family, prior local ties)

- The program has mentioned valuing “demonstrated interest” on their website or in interviews

A LOI to MGH IM or UCSF surgery probably has close to zero effect most years. A LOI to a solid but less famous community program in the city where your entire family lives has a better shot at making someone say: “They are serious; let’s keep them higher.”

How future data could change (or confirm) this picture

Right now, the LOI evidence base is where Step score prep research was 20 years ago: anecdotes, small samples, no standardized tracking.

If GME offices took this seriously, here is the kind of dataset that would end the speculation:

- For each interviewee:

  - LOI received: yes/no; type (intent vs interest)

  - Timestamp relative to rank meeting

  - Basic application stats (Step scores, AOA, research count, etc.)

  - Pre-LOI rank vs. final rank

  - Final match outcome

A multi-institutional dataset like that, even with 20–30 programs, would give you:

- Actual effect size (average rank movement conditional on LOI, controlling for strength)

- Heterogeneity of effect (which specialties, program types, applicant profiles benefit most)

- False positive rate (how often “you are my #1” letters are not matched by applicant rank lists, cross-checked against NRMP data)
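For concreteness, here is a minimal sketch of what one row of that dataset and the most naive summary could look like. The field names are hypothetical, the four records are made up, application stats are omitted for brevity, and the comparison does not control for applicant strength, so it is not the effect size described above, only the starting point for estimating it.

```python
from dataclasses import dataclass
from statistics import mean

@dataclass
class InterviewRecord:
    loi_received: bool
    loi_type: str                  # "intent", "interest", or "" when no letter
    days_before_rank_meeting: int  # 0 when no letter was received
    pre_loi_rank: int
    final_rank: int
    matched_here: bool

def mean_rank_gain(records: list[InterviewRecord], loi_received: bool) -> float:
    """Average positions gained (positive = moved up) for one group."""
    gains = [r.pre_loi_rank - r.final_rank for r in records if r.loi_received == loi_received]
    return mean(gains)

# Invented records, purely to show the calculation.
records = [
    InterviewRecord(True, "intent", 10, pre_loi_rank=12, final_rank=10, matched_here=True),
    InterviewRecord(True, "interest", 5, pre_loi_rank=20, final_rank=20, matched_here=False),
    InterviewRecord(False, "", 0, pre_loi_rank=8, final_rank=9, matched_here=False),
    InterviewRecord(False, "", 0, pre_loi_rank=15, final_rank=15, matched_here=True),
]
naive_difference = mean_rank_gain(records, True) - mean_rank_gain(records, False)
print(f"Naive difference in rank gain: {naive_difference:+.1f} positions")  # +1.5 positions
```

A real analysis would add the application stats as covariates and compare like with like; the record structure is the part programs could start collecting today.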

Given current constraints (rank confidentiality, resource limits, PD apathy), I do not expect that dataset to appear soon. But individual programs could, and occasionally do, run a stripped-down version internally.

Every time they present those at meetings, the message is the same: LOIs have marginal, context-dependent influence, dwarfed by the primary factors—scores, MSPE, interview performance, letters of recommendation.

No one has yet shown a robust, large effect. No one.

What all of this really says about LOIs

If you strip away the folklore and stare at the numbers we do have, the story is straightforward:

- Most PDs read LOIs.

- Most PDs do not let them formally move the rank list very often.

- A small subset use them as a tie-breaker or yield signal in narrow scenarios.

- Applicant overuse and dishonesty have diluted the signal, making PDs even more skeptical.

- There is weak, noisy evidence of small positive effects in specific contexts; there is no evidence of large consistent gains.

So your playbook, if you respect the data:

- Treat LOIs as a minor, not major, strategic lever.

- Send exactly one genuine “you are my #1” LOI if you choose to use that concept at all.

- Use “strong interest” language, honestly, for a few other top programs where your fit is high and backed by concrete reasons.

- Do not expect miracles. If you would not be competitive without a LOI, you will almost certainly not be competitive with one.

The data shows a simple truth: LOIs are not a cheat code. They are a weak signal in a noisy system. Use them surgically, tell the truth, and then stop obsessing about them. The real work is still in the application you submitted months earlier.