Only 11–18% of applicants who send a single “top choice” letter of intent actually match at that program.

That number alone should make you rethink how much magical power you think a LOI has. And once you start sending multiple “top” LOIs, the probabilities and the reputation cost both start looking ugly.

Let me walk through the math and the risk—like an analyst, not a vibes-only advisor.

1. What a LOI Actually Does (Based on Numbers, Not Hope)

Most applicants treat LOIs like a cheat code. The data does not support that.

Look at what program directors themselves report. The NRMP PD survey (various years) shows a consistent pattern:

- 70–85% of programs say “demonstrated interest” is somewhat or very important.

- But when forced to rank influences, Step scores, clerkship grades, interview performance, and professionalism consistently outrank post-interview communications.

- Only a minority of programs report changing rank lists “often” on the basis of a LOI.

Now translate that into behavior I have actually seen:

- At some mid-tier IM and EM programs, a genuine LOI from a strong applicant might move them up 5–15 spots on the list.

- At highly competitive specialties or prestige programs, LOIs mostly function as noise. They may break ties, but rarely rescue a borderline candidate.

- A surprising number of coordinators and PDs simply do not track “top choice” vs “very interested” very formally. They rely on notes, memory, and vibes.

So the effect size is:

- Real but modest.

- Program-dependent.

- Absolutely not deterministic.

You should be thinking: “conditional probability change” not “golden ticket.”

2. The Baseline: What Is P(Matching at a Single Program)?

You cannot talk about the cost of multiple “top” LOIs until you anchor a baseline.

Let’s define:

- \( p_0 \) = baseline probability of you matching at Program A without any LOI, given that you interviewed there.

From match data and applicant outcome spreadsheets people share (yes, I read those), the rough ranges look like this for a typical interviewed program on an applicant’s list:

- If you are competitive and the program is in your mid-range: \( p_0 \approx 8\% \text{ to } 20\% \)

- If the program is a strong reach: \( p_0 \approx 2\% \text{ to } 10\% \)

- If it is a safety or “likely”: \( p_0 \approx 20\% \text{ to } 40\% \)

Pick a concrete example to keep the math honest:

- Assume Program A is your realistic “top” choice:

\( p_0 = 0.12 \) (12% chance you match there, given an interview and no LOI)

This 12% is not pessimistic. You are competing with dozens of interviewed applicants for a handful of slots. If a program interviews 100–120 applicants for roughly 10 positions, your raw “fair share” probability is about 8–10%. Being ranked somewhat above the typical interviewee gets you up into the 10–20% band.

That is your starting point.

3. What Is the True Effect Size of a Single Honest LOI?

Now define:

- \( p_1 \) = probability of matching at Program A with a sincere LOI that explicitly says “you are my clear top choice.”

Empirically, from conversations with PDs and chief residents who sat in ranking meetings:

- At some programs, a strong LOI from a solid applicant might increase match probability by an absolute 5–10 percentage points (e.g., 12% → 17–22%).

- At many programs, the effect is smaller, like 2–5 percentage points.

- At others, especially hyper-competitive ones, it is basically zero unless you were already hovering near the top.

Take a mid-range realistic estimate:

- Assume LOI gives a 6 percentage point absolute bump:

\( p_1 = p_0 + 0.06 = 0.12 + 0.06 = 0.18 \) (18%)

So with a genuine, single “top choice” LOI, you go from:

- 12% → 18% chance at Program A.

That is a 50% relative increase in probability, but only a 6 percentage point absolute increase.
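“Relative” and “absolute” changes are easy to blur, and odds are a third, different quantity. A minimal Python sketch computing all three for the 12% → 18% example; `lift_summary` is a hypothetical helper name, and the inputs are just the illustrative \( p_0 \) and \( p_1 \) from above.

```python
def lift_summary(p0: float, p1: float) -> dict[str, float]:
    """Describe a change in match probability from p0 to p1 in three ways."""

    def odds(p: float) -> float:
        # Odds = p / (1 - p); undefined at p = 1.
        return p / (1 - p)

    return {
        "absolute_pp": (p1 - p0) * 100,     # percentage-point change
        "relative_change": p1 / p0 - 1,     # change relative to baseline probability
        "odds_ratio": odds(p1) / odds(p0),  # multiplicative change in odds
    }


for name, value in lift_summary(0.12, 0.18).items():
    print(f"{name}: {value:.3f}")
```

With these inputs it reports a 6-point absolute change, a 50% relative change in probability, and an odds ratio of about 1.61, so the “50%” describes probability, not odds.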

Does that justify sending a truthful LOI? Yes, usually.

Does it justify lying to five programs? Not even close.

4. Modeling Multiple “Top” LOIs: The Temptation and the Math

Here is the real question:

What is the probability cost and reputational cost of sending multiple “you are my #1” LOIs?

Let us build a simple model with three programs you actually like:

- Program A: reach but realistic top choice

- Program B: strong mid-tier

- Program C: solid safety that you would still be OK with

Assume (without LOIs):

- \( p_{0A} = 0.12 \) (12% chance at A)

- \( p_{0B} = 0.18 \) (18% chance at B)

- \( p_{0C} = 0.25 \) (25% chance at C)

And assume for programs that “care” about LOIs, the same bump if you send a sincere top-choice letter:

- LOI → +6 percentage points at that program

- So \( p_1 = \min(p_0 + 0.06,\ 0.90) \), i.e., capped at something like 0.90 (because nothing is certain)

We will look at three strategies:

- Strategy S1: Send a true top-choice LOI to Program A only.

- Strategy S2: Send “top choice” LOIs to A and B.

- Strategy S3: Send “top choice” LOIs to A, B, and C.

We will ignore the small complication that you can only match at one place; we are just looking at marginal changes in probabilities, given that the match algorithm places you at your highest-ranked program among those that rank you high enough to offer you a position.

5. Strategy S1: Single Honest LOI

Under S1:

- Program A gets your top LOI:

\( p_A = p_{0A} + 0.06 = 0.18 \)

- B and C receive either no LOI or a weaker “very interested” note, which has a smaller or negligible impact (say +2 percentage points at most; to simplify, we keep them at baseline).

So your per-program match probabilities become:

- A: 18%

- B: 18%

- C: 25%

Approximate probability of matching somewhere among A/B/C (assuming independence for rough modeling):

\[ P(\text{match at any of A, B, C}) = 1 - (1-0.18)(1-0.18)(1-0.25) \]

\[ = 1 - (0.82 \times 0.82 \times 0.75) \]

\[ = 1 - (0.82^2 \times 0.75) = 1 - (0.6724 \times 0.75) = 1 - 0.5043 \approx 0.4957 \]

So roughly 49.6% chance that you match at one of these three programs.

This is a simplification, but the relative comparisons across strategies are what matter.

Your probability of matching at your true top choice (A) is 18%. That is what the single LOI “buys” you.
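The aggregate figure is a one-line product under the same independence assumption. A minimal Python sketch reproducing S1; `p_match_any` and `with_loi` are hypothetical helper names, and the +0.06 bump and 0.90 cap are the model’s assumptions, not measured values.

```python
from math import prod


def p_match_any(probs) -> float:
    """P(match at >= 1 listed program), treating programs as independent."""
    return 1 - prod(1 - p for p in probs)


def with_loi(p0: float, bump: float = 0.06, cap: float = 0.90) -> float:
    """Apply the model's additive LOI bump, capped so no probability reaches 1."""
    return min(p0 + bump, cap)


baseline = {"A": 0.12, "B": 0.18, "C": 0.25}

# Strategy S1: only Program A receives the honest top-choice LOI.
s1 = {name: with_loi(p) if name == "A" else p for name, p in baseline.items()}
print(", ".join(f"{name}={p:.2f}" for name, p in s1.items()))
print(f"S1 P(match at A, B, or C) = {p_match_any(s1.values()):.4f}")
```

This prints the per-program values 0.18, 0.18, 0.25 and an aggregate of about 0.4957.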

6. Strategy S2: Two “Top” LOIs (A and B)

Now suppose you get greedy and tell both A and B they are your “#1 choice.” The per-program probabilities become:

- A: gets “top choice” LOI → tries to bump you: \( p_A = 0.18 \)

- B: also gets “top choice” LOI → tries to bump you: \( p_B = 0.18 + 0.06 = 0.24 \)

- C: stays at baseline: \( p_C = 0.25 \)

First, from a naive probability standpoint, this looks like a win:

\[ P(\text{match at any of A, B, C}) = 1 - (1-0.18)(1-0.24)(1-0.25) \]

\[ = 1 - (0.82 \times 0.76 \times 0.75) = 1 - (0.82 \times 0.57) = 1 - 0.4674 \approx 0.5326 \]

Now your aggregate chance of matching to one of these three looks like ~53.3%.

Compared to ~49.6% for S1. A few percentage points better overall.

But that is the naive, best-case, “programs believe you and there is no downside” model.

Reality is messier. Programs are not independent, and reputations exist.

7. Strategy S3: Blanket Three “Top” LOIs (A, B, C)

Push the envelope:

- A: 18% with LOI

- B: 24% with LOI

- C: 31% with LOI (0.25 + 0.06)

Naive aggregate probability:

\[ P(\text{match at any of A, B, C}) = 1 - (1-0.18)(1-0.24)(1-0.31) \]

\[ = 1 - (0.82 \times 0.76 \times 0.69) = 1 - (0.82 \times 0.5244) = 1 - 0.4300 = 0.5700 \]

So ~57% chance in this cartoon world. Again, looks “better” numerically.
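The three naive strategies differ only in which programs get the bump, so one short Python sketch covers all of them. It assumes exactly what the cartoon world assumes: every “top” LOI is believed, programs are independent, and nothing goes wrong. `naive_probs` and the constant names are hypothetical.

```python
from math import prod

BASELINE = {"A": 0.12, "B": 0.18, "C": 0.25}
BUMP, CAP = 0.06, 0.90


def p_match_any(probs) -> float:
    """Independence approximation: 1 - product of per-program miss probabilities."""
    return 1 - prod(1 - p for p in probs)


def naive_probs(top_loi_targets: set[str]) -> dict[str, float]:
    """Per-program probabilities if every 'top' LOI is believed and costs nothing."""
    return {
        name: min(p + BUMP, CAP) if name in top_loi_targets else p
        for name, p in BASELINE.items()
    }


strategies = {"S1": {"A"}, "S2": {"A", "B"}, "S3": {"A", "B", "C"}}
for label, targets in strategies.items():
    print(f"{label}: {p_match_any(naive_probs(targets).values()):.4f}")
```

It reproduces roughly 0.4957, 0.5326, and 0.5700: each extra “top” LOI looks like a free gain only because no downside is modeled.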

If you stopped here, you would walk away thinking: “Obviously I should spam top-choice letters.”

That is the trap. You are assuming:

- Every program reacts positively.

- No program detects the lie (directly or via shared coordinators/attendings).

- No one ever gossips about “that applicant who told three places they were #1.”

- No PD docks you for lack of integrity (which several explicitly do).

The cost is not in the naive probability math. The cost is in credibility loss.

8. How Detection and Reputation Risk Crush the Expected Value

Let us model the reputational hit, because that is where most applicants are willfully blind.

Here is how detection actually happens:

- Same specialty, same region: PDs run into each other at conferences, in WhatsApp groups, and in informal chats.

- Coordinators talk. They do. “Oh yeah, that applicant told us we were their top.” Response: “Funny, they told us that too.”

- Faculty who rotate at multiple sites compare notes informally: “Did you rank that student high? They told us we were their top choice.”

You might think the probability of being caught is tiny. It is not zero, and in tight specialty networks (ENT, Derm, Ortho, some IM subspecialties), it is high enough to matter.

Let us assign a conservative number:

- \( q \) = probability that at least one program finds out you sent multiple “top” LOIs.

For three “top” LOIs in a moderately tight specialty, a reasonable range might be:

- \( q = 0.10 \) to \( 0.30 \).

Call it 0.20 (20%) for modeling.

Once detected, how much does it hurt you? Programs differ, but I have seen:

- Some PDs shrug and ignore future LOIs from you, reverting you to baseline or slightly below.

- Others actively dock you: you get knocked down the rank list for perceived dishonesty.

- In the worst cases (and yes, this has happened), you go from mid-list to effectively “do not rank.”

So define a “penalty factor” ( d ):

- Upon detection at a given program, your match probability there becomes \( p_{\text{penalized}} = \max(0,\ p_0 - d) \)

Pick a modest \( d = 0.05 \) (5 percentage points). Not even the nuclear response.

Now model Strategy S3 with detection risk:

Case 1: No detection (probability \( 1-q = 0.80 \))

- You get the full naive LOI bump:

- A: 18%, B: 24%, C: 31%

- Aggregate match probability among A/B/C: 57% (from before).

Case 2: Detection occurs (probability \( q = 0.20 \))

This is where details matter. Not every program will detect it, but to simplify:

- Suppose if detection happens, all three programs learn about your duplicity (worst-case / conservative for you).

- Then each program’s probability falls to \( p_0 - d \):

- A: ( 0.12 - 0.05 = 0.07 ) (7%)

- B: ( 0.18 - 0.05 = 0.13 ) (13%)

- C: ( 0.25 - 0.05 = 0.20 ) (20%)

Compute aggregate:

\[ P(\text{match at any of A, B, C} \mid \text{detection}) = 1 - (1-0.07)(1-0.13)(1-0.20) \]

\[ = 1 - (0.93 \times 0.87 \times 0.80) = 1 - (0.93 \times 0.696) = 1 - 0.6473 \approx 0.3527 \]

So if word gets out, your chance of matching at any of these three drops to ~35.3%.

Now we combine:

\[ \text{Expected probability} = (1-q) \times 0.57 + q \times 0.3527 \]

\[ = 0.80 \times 0.57 + 0.20 \times 0.3527 = 0.456 + 0.0705 \approx 0.5265 \]

So under this (still simplified) model:

- S3 (three “top” LOIs) expected match rate ≈ 52.7%

- S1 (single LOI) expected match rate ≈ 49.6%

You bought a ~3 percentage point improvement in aggregate chances by accepting:

- A nontrivial chance of being labeled untrustworthy.

- Potential long-term damage to your reputation with PDs and faculty who may later control fellowship opportunities.

For about 3 percentage points. That is a bad trade.
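The two-case calculation is just a weighted average of two independence-model products. A minimal Python sketch that reproduces it; `s3_expected` is a hypothetical name, and \( q \), \( d \), and the “all programs learn at once” rule are the modeling assumptions above, not data.

```python
from math import prod


def p_match_any(probs) -> float:
    """Independence approximation: 1 - product of per-program miss probabilities."""
    return 1 - prod(1 - p for p in probs)


def s3_expected(baseline, bump, q, d, cap=0.90):
    """Expected P(match at any program) when every program gets a 'top' LOI.

    Assumes detection (probability q) reaches all programs at once, and each
    detected program then drops to max(0, p0 - d).
    """
    undetected = p_match_any(min(p + bump, cap) for p in baseline)
    detected = p_match_any(max(0.0, p - d) for p in baseline)
    return (1 - q) * undetected + q * detected, undetected, detected


ev, undetected, detected = s3_expected([0.12, 0.18, 0.25], bump=0.06, q=0.20, d=0.05)
print(f"undetected={undetected:.4f} detected={detected:.4f} expected={ev:.4f}")
```

With these parameters it gives about 0.5700, 0.3527, and 0.5265, matching the hand calculation.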

9. Program Behavior: Conditional Logic You Are Ignoring

The pure-probability model still overestimates the benefit of lying, because programs are not random machines.

Once programs become aware that multiple “top” LOIs are common, many of them adjust:

- Some PDs ignore all LOIs claiming “you’re my #1” and only care about:

  - Applicants who backed it up with actions (early ranking forms, follow-up, visiting rotations).

  - Internal candidates.

- Others only take it seriously if:

  - The applicant is already near the top of their list.

  - The faculty who interviewed the applicant push for that person hard.

So your “6% bump” assumption does not even hold uniformly. It may shrink to:

- +1–2% at programs saturated with LOIs.

- 0% at programs that are jaded.

- Or selectively applied only for a small subset of already-strong applicants.

You are taking the LOI’s maximum possible effect and then layering risk on top of it. That is doubly optimistic.

10. The Cost of Diluting a Single Clear Signal

There is also an opportunity cost that people rarely quantify: signal dilution.

When you send one clear, honest LOI, two things happen:

- Program A gets a crisp, unambiguous signal. They can justify bumping you because they know, probabilistically, you are more likely to match there if ranked high.

- You behave consistently with that signal. Your post-interview communications, order of interactions, and vibe align with “A is #1.”

When you send multiple “top” LOIs:

- Each program’s belief in your commitment drops. Rationally, they should discount your claim because they know applicants lie.

- Your own behavior becomes noisier. You are hedging. PDs notice subtle things:

  - How specifically you talk about their program.

  - Whether you follow up consistently or vanish after the LOI.

  - Whether your away rotation choices and actual rank behavior (as far as can be inferred) line up with your words.

All of this pushes the effective bump from your LOI downward. The net effect:

- Single LOI: maybe truly +5–10 percentage points at one program.

- Multiple LOIs: maybe only +2–3 percentage points at each program, if that.

Redo Strategy S3 with a more realistic diluted bump of +3% instead of +6%:

- A: 0.12 → 0.15

- B: 0.18 → 0.21

- C: 0.25 → 0.28

Aggregate no-detection probability:

\[ 1 - (1-0.15)(1-0.21)(1-0.28) = 1 - (0.85 \times 0.79 \times 0.72) = 1 - (0.85 \times 0.5688) = 1 - 0.4835 \approx 0.5165 \]

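Now you are at ~51.7% before any detection penalty, versus ~49.6% for the single-LOI strategy. Apply the same detection model as before (\( q = 0.20 \), detected-case probability ~35.3%) and the expected rate is \( 0.80 \times 0.5165 + 0.20 \times 0.3527 \approx 0.484 \): about 48.4%, below the honest single LOI.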

The upside keeps shrinking. The risk profile does not.
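To see how dilution interacts with detection risk, a minimal Python sketch can run S3 at both the full and the diluted bump and compare each against S1. It assumes the section 8 parameters (\( q = 0.20 \), \( d = 0.05 \), detection reaching every program) and omits the 0.90 cap because no probability here gets near it; the constant names are hypothetical.

```python
from math import prod


def p_match_any(probs) -> float:
    """Independence approximation: 1 - product of per-program miss probabilities."""
    return 1 - prod(1 - p for p in probs)


BASELINE = [0.12, 0.18, 0.25]
Q, D = 0.20, 0.05  # detection probability and penalty assumed in section 8

# S1 benchmark: honest +6-point LOI at Program A only; no detection risk modeled.
s1 = p_match_any([BASELINE[0] + 0.06, *BASELINE[1:]])

for bump in (0.06, 0.03):
    undetected = p_match_any(p + bump for p in BASELINE)
    detected = p_match_any(max(0.0, p - D) for p in BASELINE)
    expected = (1 - Q) * undetected + Q * detected
    print(f"bump={bump:.2f}: undetected={undetected:.4f} "
          f"expected={expected:.4f} vs S1={s1:.4f}")
```

At +6 points S3 still edges out S1 in expectation (about 0.527 vs 0.496); at +3 points it falls to roughly 0.484, below S1.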

11. A Cleaner Strategy: “Tiered” Honesty with Modeled Payoff

The data and modeling strongly support one straightforward strategy:

- Send one explicit “you are my clear #1” LOI.

- Send several “you are a top program for me and I would be very happy here” messages that:

  - Are honest (they are in your top tier).

  - Avoid the precise “#1” language.

Why this works probabilistically:

- You preserve the strong, ~+5–10% effect at one program where you truly commit.

- You can still get a smaller bump (+1–3%) at a few others by signaling sincere interest without lying.

- You minimize the detection risk because:

  - You are not technically contradicting yourself.

  - Even if emails are compared, they are clearly tiered.

Add a rough model:

- Program A (true #1): 0.12 → 0.18

- Programs B, C, D (top tier but not #1): each get a +2% bump

  - B: 0.18 → 0.20

  - C: 0.25 → 0.27

  - D: 0.20 → 0.22

You now have four programs with slightly elevated probabilities without the integrity risk of multiple “top” LOIs. The aggregate probability of matching somewhere you like ticks up into a similar range as the liar strategies, but you do not risk the reputational cliff.
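The tiered model can be scored with the same independence product. A minimal Python sketch, using the baselines and bumps listed above (including D’s 0.20 baseline); it reports A–C separately because S1–S3 never modeled a fourth program, so only that figure is like-for-like.

```python
from math import prod


def p_match_any(probs) -> float:
    """Independence approximation: 1 - product of per-program miss probabilities."""
    return 1 - prod(1 - p for p in probs)


# Baselines and bumps as assumed in the tiered model above.
baseline = {"A": 0.12, "B": 0.18, "C": 0.25, "D": 0.20}
bump = {"A": 0.06, "B": 0.02, "C": 0.02, "D": 0.02}  # +6 for true #1, +2 for "top tier"
tiered = {name: baseline[name] + bump[name] for name in baseline}

# Same three programs as S1-S3, for a like-for-like comparison.
abc = p_match_any(tiered[name] for name in "ABC")
# All four programs; higher mainly because a fourth program is counted.
abcd = p_match_any(tiered.values())
print(f"tiered, A-C: {abc:.4f}; tiered, A-D: {abcd:.4f}")
```

Over A, B, and C alone this gives about 0.5211, close to S3’s ~0.527 after penalties but with no “top” claim to contradict.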

12. When, Statistically, a Second “Top” LOI Might Be Rational

There is one edge case where the data can justify a second “top” LOI, and I will be blunt: it is rare.

Conditions:

- You are couples matching and your rank behavior is highly constrained.

- You have strong evidence that Program A already ranks you very highly independent of any LOI.

- Program B is more uncertain but strategically critical for couples logistics.

- You know (from explicit statements) that Program B heavily weights LOIs, while Program A barely cares.

In that very specific setup:

- The incremental benefit at B might be large (say +10–15%).

- The marginal benefit at A might be minimal (say +1–2%).

- The reputational risk might be mitigated by geography and low overlap in faculty.

Under those very tight constraints, the expected value calculation could tilt towards two “top” LOIs. But you need:

- Clear, program-specific knowledge.

- Real couples-match constraints.

- A willingness to accept some long-term risk.

That is not most applicants.

13. Quick Comparative Table: Expected Outcomes

To make the trade-offs concrete, here is a compact summary comparing strategies using our mid-range assumptions.

| Strategy | LOI Pattern | Detection Risk | Est. Match at Any of Top Programs |
|---|---|---|---|
| S1 | 1 honest top LOI | Very low | ~50% |
| S2 | 2 “top” LOIs | Low–moderate | ~51–53% |
| S3 | 3 “top” LOIs | Moderate | ~52–53% (after penalties) |
| Tiered | 1 top + several “high interest” | Very low | ~51–54% |

You are taking real reputation risk for at most a few percentage points of expected gain—and in many realistic parameter choices, there is no gain at all.
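One way to check the “no gain at all” claim is to solve for the detection probability at which S3’s expected rate drops to S1’s. Setting \( (1-q)U + qD_{\text{det}} = S_1 \) gives \( q^* = (U - S_1)/(U - D_{\text{det}}) \). A minimal Python sketch under the section 8 assumptions (\( d = 0.05 \), detection reaching every program, independence); `breakeven_q` is a hypothetical helper.

```python
from math import prod


def p_match_any(probs) -> float:
    """Independence approximation: 1 - product of per-program miss probabilities."""
    return 1 - prod(1 - p for p in probs)


def breakeven_q(baseline, bump, d):
    """Detection probability above which S3 (all 'top' LOIs) underperforms S1.

    Solves (1 - q) * undetected + q * detected = s1 for q.
    """
    s1 = p_match_any([baseline[0] + 0.06, *baseline[1:]])  # honest LOI to A only
    undetected = p_match_any(p + bump for p in baseline)
    detected = p_match_any(max(0.0, p - d) for p in baseline)
    if undetected <= s1:
        return 0.0  # S3 never beats S1, even with zero detection risk
    return (undetected - s1) / (undetected - detected)


for bump in (0.06, 0.03, 0.02):
    q_star = breakeven_q([0.12, 0.18, 0.25], bump, 0.05)
    print(f"bump={bump:.2f}: S3 loses to S1 once q > {q_star:.3f}")
```

Under these assumptions the break-even \( q \) is about 0.34 with the full +6-point bump, about 0.13 at +3 points, and about 0.01 at +2 points: the more diluted the signal, the less detection risk it takes to erase the gain.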

14. Visualizing the Diminishing Returns

| Strategy | Est. match at any top program (%) |
|---|---|
| 1 top LOI | 50 |
| 2 top LOIs | 52 |
| 3 top LOIs | 53 |
| Tiered honesty | 53 |

The values are approximate, but the pattern holds in most sane parameter sets: outcomes plateau. Risk does not.

15. Process Flow: A Rational LOI Decision Framework

| Step | Action |
|---|---|
| 1 | List all programs you like. |
| 2 | Rank them honestly. |
| 3 | Ask: does a clear #1 exist? |
| 4a | If yes: send a single explicit top LOI to that program. |
| 4b | If no: do not send any top LOI. |
| 5 | Identify 3–5 next-tier programs. |
| 6 | Send strong interest letters without a #1 claim. |
| 7 | Stop. No more top LOIs. |

That is the clean, low-risk approach. It aligns your words with your actual rank list.
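The same flow can be written as a small function. This is a sketch only: `loi_plan`, its parameters, and the default of four next-tier programs are illustrative choices, not part of any tool. It enforces the one rule that matters: at most one explicit top-choice letter.

```python
def loi_plan(ranked_programs: list[str], has_clear_top: bool,
             next_tier_size: int = 4) -> dict[str, str]:
    """Map programs to the letter each should receive under the flow above.

    ranked_programs: your honest rank order, best first.
    has_clear_top: True only if ranked_programs[0] is a genuine, clear #1.
    next_tier_size: how many next-tier programs get interest letters (3-5).
    """
    if not 3 <= next_tier_size <= 5:
        raise ValueError("next_tier_size should be between 3 and 5")
    plan: dict[str, str] = {}
    rest = ranked_programs
    if has_clear_top and ranked_programs:
        plan[ranked_programs[0]] = "explicit top-choice LOI"  # exactly one
        rest = ranked_programs[1:]
    for program in rest[:next_tier_size]:
        plan[program] = "strong-interest letter, no #1 claim"
    # Programs not in the plan get no letter; no further top LOIs are sent.
    return plan


print(loi_plan(["A", "B", "C", "D", "E", "F"], has_clear_top=True))
```

Without a clear #1, the function sends no top-choice letter and gives strong-interest letters to the first few programs on the honest list instead.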

16. The Real “Cost”: Not Just This Match Cycle

One final point most people ignore because they are obsessed with Match Day only: PDs do not disappear after you graduate.

- They write fellowship letters.

- They talk to fellowship PDs.

- They hire faculty.

- They remember residents who played games in the match.

From a long-term expected value standpoint, burning trust for a trivial bump in match probability is a poor trade. Especially in smaller specialties where faces repeat and networks are tight.

FAQ (4 Questions)

1. Does sending any LOI help, or only “top choice” letters?

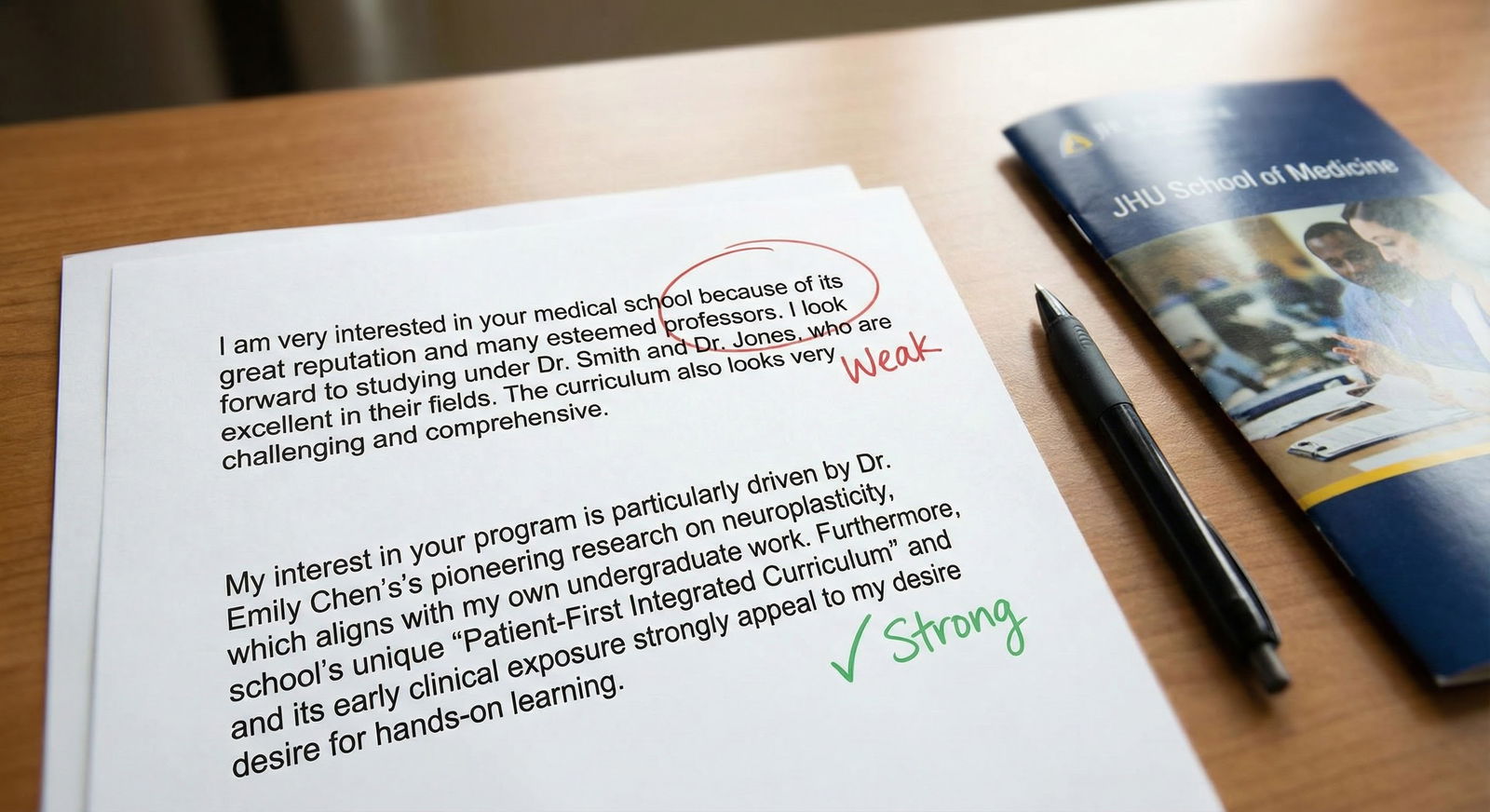

The data and PD reports suggest that any thoughtful, specific LOI can help marginally, but the magnitude depends on content and credibility. A sincere “you are a top program for me because of X, Y, Z” often yields a smaller bump (+1–3 percentage points) compared with a clear “you are my #1,” which can be +5–10 points at programs that care. Generic, copy-paste letters with no program-specific content usually have negligible effect.

2. Can programs actually see my full rank list or whether I lied in a LOI?

No, programs do not see your rank list from NRMP, and the match algorithm is designed to protect applicant and program confidentiality. The risk arises not from official data sharing but from informal communication: PDs, coordinators, and faculty share anecdotes and compare notes. That is how contradictory “top” claims get exposed, not through any NRMP leak.

3. What if I genuinely cannot decide on a single top program—should I skip a top LOI entirely?

If you truly do not have a clear #1, it is more rational to skip a “top choice” LOI than to fabricate certainty. Instead, send strong, honest interest letters to your top several programs explaining why they are in your first tier. The probability gain from one forced, semi-fake “top” LOI is small and does not outweigh the integrity and reputational risk if your behavior later contradicts your claim.

4. Do highly competitive specialties treat LOIs differently from less competitive ones?

Yes. In very competitive specialties (Derm, ENT, Ortho, some surgical subspecialties), programs often receive a flood of LOIs and become jaded; many PDs in these fields report that LOIs rarely drive major rank movement unless the applicant is already near the top and well known to the faculty. In less competitive or community-focused programs, a well-written, sincere LOI can carry more weight and produce a larger probability bump. Either way, the relative risk of sending multiple “top” LOIs still outweighs the modest expected gain.

Key points:

- A single, honest “top choice” LOI provides a modest but real bump in your chance of matching at that program; multiple “top” LOIs offer at most small additional expected gains.

- The detection and reputational risk of sending multiple conflicting “top” LOIs imposes a real probability and long-term cost that applicants consistently underestimate.